In Part 1 of this series, I discussed building a proper FreeNAS server and prepared a Dell PERC H200 by flashing it to an LSI 9211-8i in IT mode. But while I was looking around for suitable hardware for the build, I decided to try something that I’ve wanted to do for a long time – PCI passthrough.

This would give me an opportunity to tinker with VT-d passthrough and put my freshly flashed Dell PERC H200 through its paces.

Why Not VMDK Disks?

As mentioned in Part 1 of this series, FreeNAS makes use of ZFS, which is much more than just a filesystem. It combines the functionality of a logical volume manager and an advanced filesystem, providing a whole slew of features including redundancy and data integrity. For it to do this effectively – and safely – ZFS needs direct access to SATA or SAS drives. We want ZFS to manage all aspects of the drives and the storage pool, so we should remove as many layers of abstraction as possible between the FreeNAS OS and the drives themselves.

As you probably know, FreeNAS works well enough as a virtual machine for lab purposes. After all, ignoring what’s in between, ones and zeros still make it from FreeNAS to the disks. That said, using a virtual SCSI adapter and VMDK disks certainly does not qualify as ‘direct access’. In fact, the data path would be packed with layers of abstraction and would look something like this:

Physical Disk <-> SATA/SAS HBA <-> ESXi Hypervisor HBA driver <-> VMFS 5 Filesystem <-> VMDK virtual disk <-> Virtual SCSI Adapter <-> FreeNAS SCSI driver <-> FreeNAS/FreeBSD

In contrast, a physical FreeNAS server would look more like:

Physical Disk <-> SATA/SAS HBA <-> FreeNAS HBA driver <-> FreeNAS/FreeBSD

What About RDMs?

More advanced users may try to overcome this to some degree by using local RDMs (raw device mappings). RDMs allow you to give a VM exclusive access to a physical LUN – or disk. Unfortunately, RDMs aren’t officially supported on local disks and are intended for use with remote FC/iSCSI LUNs.

Physical Disk <-> SATA/SAS HBA <-> ESXi Hypervisor HBA driver <-> Virtual SCSI Adapter <-> Guest SCSI driver <-> FreeNAS/FreeBSD

As seen above, RDMs bypass the VMFS filesystem and VMDK virtual disks completely, but the VM still makes use of an emulated SCSI adapter of one type or another. Even with ‘physical mode’ RDMs, this extra virtual SCSI adapter still provides some degree of abstraction and hinders direct access to the disks. This is certainly better, but it’s far from ideal.

PCI Passthrough – As good as it gets for a FreeNAS VM.

PCI Passthrough – or VMDirectPath I/O as VMware calls it – is not at all a new feature. It was originally introduced back in vSphere 4.0 after Intel and AMD introduced the necessary IOMMU processor extensions to make this possible. For passthrough to work, you’ll need an Intel processor supporting VT-d or an AMD processor supporting AMD-Vi as well as a motherboard that can support this feature.

In a nutshell, PCI passthrough allows you to give a virtual machine direct access to a PCI device on the host. And when I say direct, I mean direct – the guest OS communicates with the PCI device via IOMMU and the hypervisor completely ignores the card.

So when it comes to FreeNAS, we can eliminate the virtual SCSI adapter and allow the OS exclusive control of the LSI 9211-8i adapter. You’ll find that from the perspective of FreeNAS/FreeBSD, there is no difference between a passed through SAS adapter and what you’d see in a physical box – both will detect the card at a specific PCI address and load the appropriate ‘mps’ driver.

Physical Disk <-> SATA/SAS HBA <-> | VT-d/AMD-Vi | <-> FreeNAS HBA driver <-> FreeNAS/FreeBSD
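To make the comparison a bit more concrete, the four data paths described in this post can be written out as lists and the layers sitting between the disk and the OS counted. This is only an illustrative sketch: the names `DATA_PATHS` and `layers_between` are made up for this example, the layer names are copied from the paths above, and counting the IOMMU as a "layer" is a simplification.

```python
# Illustrative only: each data path from this post as an ordered list,
# from the physical disk up to the FreeNAS/FreeBSD OS.
DATA_PATHS = {
    "VMDK": [
        "Physical Disk", "SATA/SAS HBA", "ESXi Hypervisor HBA driver",
        "VMFS 5 Filesystem", "VMDK virtual disk", "Virtual SCSI Adapter",
        "FreeNAS SCSI driver", "FreeNAS/FreeBSD",
    ],
    "RDM": [
        "Physical Disk", "SATA/SAS HBA", "ESXi Hypervisor HBA driver",
        "Virtual SCSI Adapter", "Guest SCSI driver", "FreeNAS/FreeBSD",
    ],
    "Passthrough": [
        "Physical Disk", "SATA/SAS HBA", "VT-d/AMD-Vi",
        "FreeNAS HBA driver", "FreeNAS/FreeBSD",
    ],
    "Physical": [
        "Physical Disk", "SATA/SAS HBA", "FreeNAS HBA driver",
        "FreeNAS/FreeBSD",
    ],
}


def layers_between(path):
    """Count the layers between the disk and the OS (both endpoints excluded)."""
    return len(path) - 2


# Print the paths from most to fewest intermediate layers.
for name, path in sorted(DATA_PATHS.items(),
                         key=lambda item: layers_between(item[1]),
                         reverse=True):
    print(f"{name:12s} {layers_between(path)} intermediate layers")
```

Seen this way, passthrough differs from a physical build only by the IOMMU translation step, while the VMDK path adds four hypervisor-owned layers on top of what a physical box has.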

Configuring PCI Passthrough

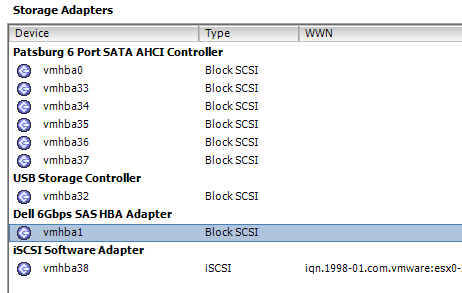

After booting up my primary management host with the Dell PERC H200 card installed, I confirmed that the HBA driver was loaded by ESXi and saw that it was indeed showing up as a storage adapter that could be used:

This is not what we want. The adapter needs to be made available for passthrough so that ESXi ignores it.

So without further ado, let’s make this adapter available for passthrough. To do this from the vSphere Web Client, select your host, click the Manage tab, and then PCI Devices under the Hardware section.

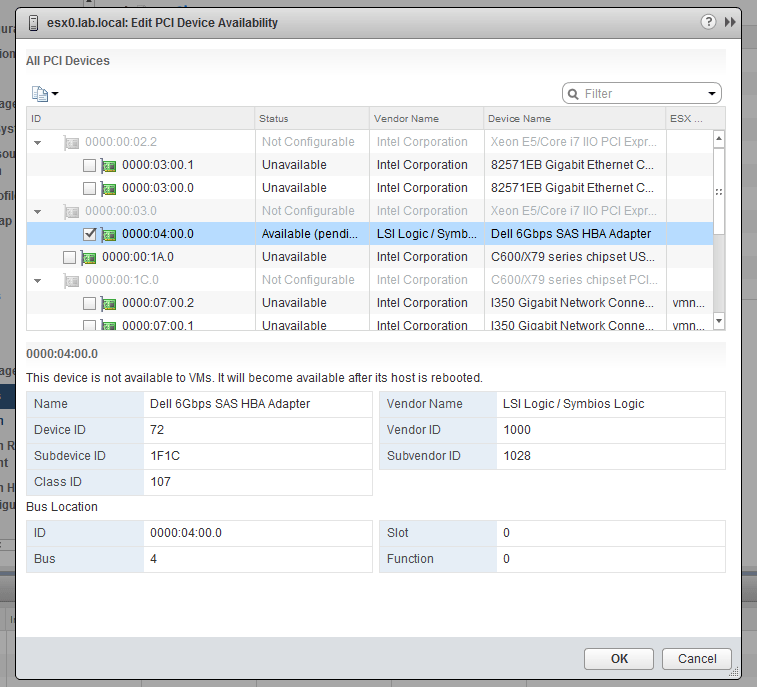

If you haven’t passed through any devices yet, the list will be empty and you can click the little ‘pencil’ icon above to view eligible devices.

In my case, I can see several eligible devices, including the Dell SAS HBA, several network adapters and the onboard AHCI storage controller. After selecting the Dell SAS HBA and clicking OK, I could see that the host was now in a ‘Reboot Required’ status.

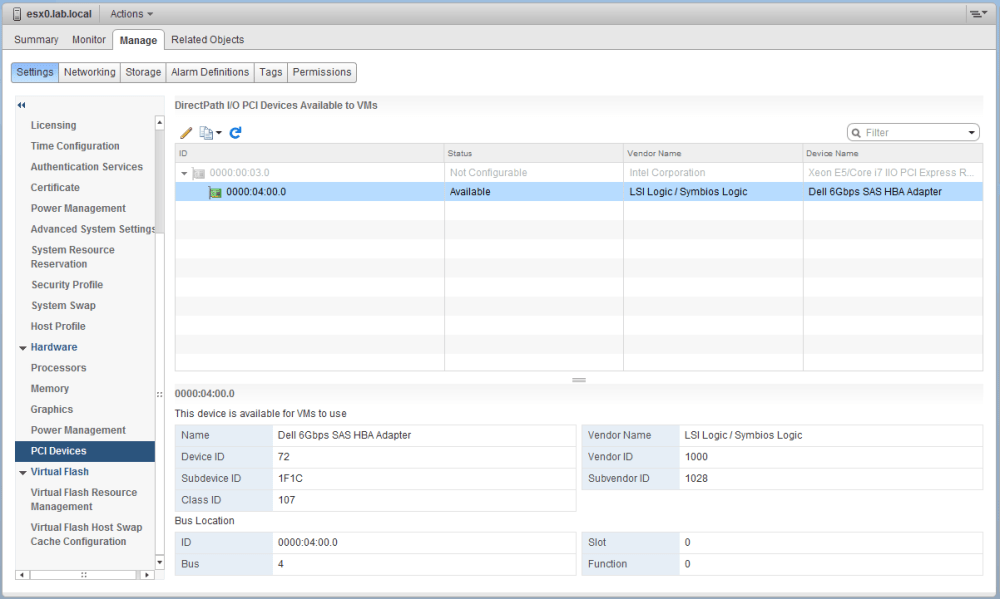

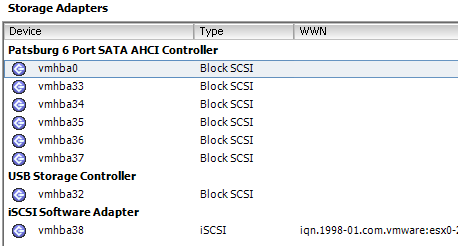

After rebooting ESXi, I was happy to see that the adapter was now displayed as available for passthrough and was no longer loaded as an HBA available to ESXi.

Building the FreeNAS VM

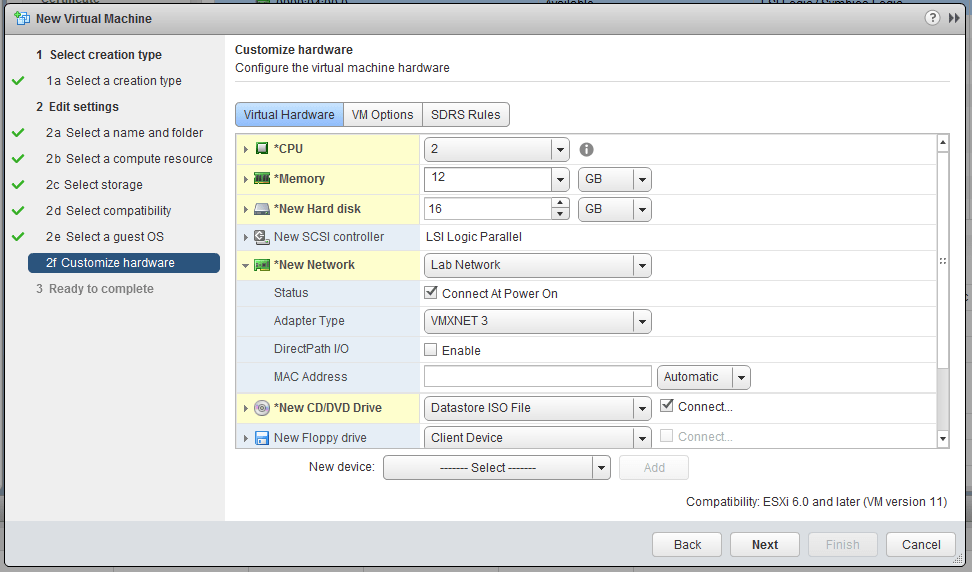

I’m not going to go into too much detail about building the FreeNAS VM, but below are some recommendations:

Guest OS type: Other, FreeBSD 64-bit

CPUs: 2x vCPUs

Memory: 12GB (a minimum of 8GB is required)

Hard Disk: 16GB (for the FreeNAS OS boot device, a minimum of 8GB is required)

New SCSI controller: LSI Logic Parallel (for the FreeNAS boot device only)

Network adapter type: VMXNET3 (E1000 is default)

CD/DVD Drive: Mount the FreeNAS 9.10 ISO from a datastore

You may be wondering why we are adding a VMDK virtual disk and a SCSI controller to this VM if we’re doing passthrough. The reason is that we still need some kind of boot device for FreeNAS to use. Normally in the physical world, we’d just use an 8GB or larger USB thumb drive. With a VM, the easiest way to accomplish this is to simply use a small VMDK disk and the default LSI Logic Parallel SCSI controller. Remember, this boot device has nothing to do with the ZFS storage pool on the passed-through disks, so there is no issue with this.

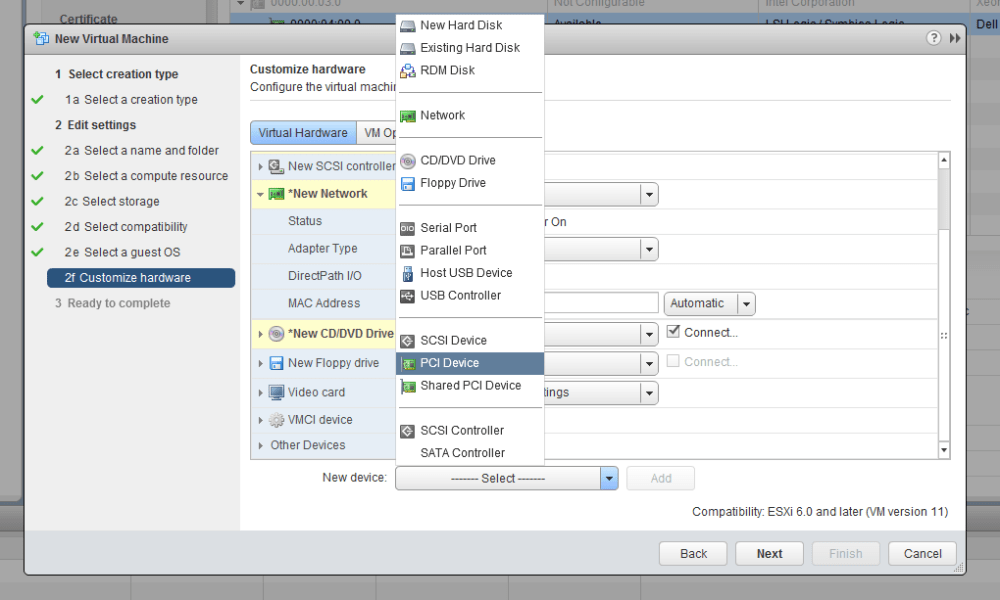

Once we have those parameters set, we’ll need to add our PCI device using the ‘New device’ dropdown.

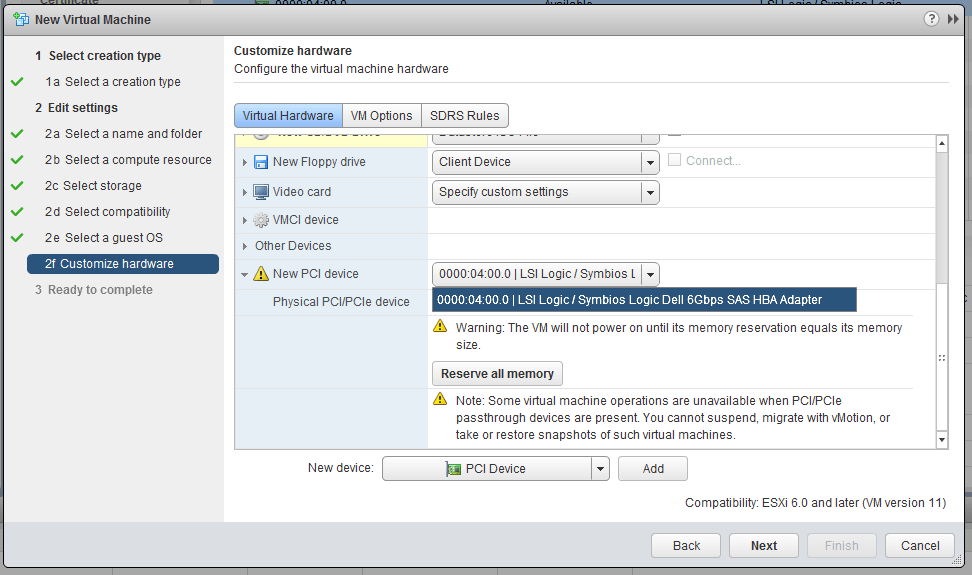

Once the PCI Device is added, we can select the Dell SAS HBA from the dropdown. As soon as this device is added, you’ll also be greeted by a warning stating that all memory for this VM must be reserved. This is not optional – the VM will not power on unless this is done. Because a passed-through device can read and write guest memory directly via the IOMMU, that memory has to stay resident in physical RAM on the host and can’t be swapped or reclaimed. This may or may not be a problem for you depending on the amount of RAM you have available in your lab. That said, we want this VM performing as consistently as possible, so this is not a bad thing.
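For anyone who prefers to double-check the reservation outside the Web Client, the sketch below parses the text of a `.vmx` file and checks that the memory reservation covers all configured memory. It assumes the commonly seen keys `memSize` (configured memory in MB) and `sched.mem.min` (reservation in MB); these were not taken from this build, so verify them against your own `.vmx` file. The helper names and the sample text are hypothetical.

```python
import re


def parse_vmx(text):
    """Parse 'key = "value"' lines from .vmx-style text into a dict.

    Keys are lowercased so lookups don't depend on capitalization.
    """
    settings = {}
    for line in text.splitlines():
        match = re.match(r'\s*([^#=\s]+)\s*=\s*"(.*)"\s*$', line)
        if match:
            settings[match.group(1).lower()] = match.group(2)
    return settings


def memory_fully_reserved(settings):
    """Return True if the reservation (assumed key: sched.mem.min) covers memSize."""
    configured_mb = int(settings.get("memsize", 0))
    reserved_mb = int(settings.get("sched.mem.min", 0))
    return configured_mb > 0 and reserved_mb >= configured_mb


# Hypothetical sample for a 12GB VM; not copied from the VM in this post.
SAMPLE_VMX = '''
memSize = "12288"
sched.mem.min = "12288"
pciPassthru0.present = "TRUE"
'''

print(memory_fully_reserved(parse_vmx(SAMPLE_VMX)))
```

The check is deliberately strict: a missing or partial reservation returns `False`, which mirrors the fact that the VM will refuse to power on in that state.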

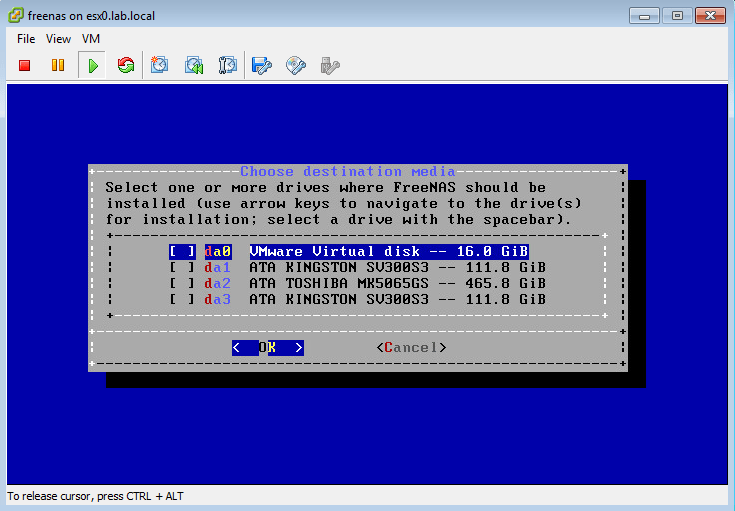

Once finished, I booted the VM via the mounted CD image. The FreeNAS installer greeted me with the following possible install devices:

I can see all three of the physical drives I connected to the PERC H200 adapter, as well as the 16GB virtual disk that I want to use as a boot device. Clearly, passthrough is working!

Once I finished the install and went through the initial configuration, I SSH’ed into the VM to take a look at the PCI device:

    [root@freenas] ~# dmesg | grep LSI
    mps0: <Avago Technologies (LSI) SAS2008> port 0x4000-0x40ff mem 0xfd5f0000-0xfd5fffff,0xfd580000-0xfd5bffff irq 18 at device 0.0 on pci3

As you can see above, FreeNAS can clearly see an LSI SAS2008 based adapter at a specific PCI address on the system.

Although lspci is a Linux tool, it is also available in the FreeBSD version FreeNAS uses. We can get some more adapter information using the verbose -v flag:

    [root@freenas] ~# lspci -v
    <snip>
    03:00.0 Serial Attached SCSI controller: LSI Logic / Symbios Logic SAS2008 PCI-Express Fusion-MPT SAS-2 [Falcon] (rev 03)
            Subsystem: Dell 6Gbps SAS HBA Adapter
            Flags: bus master, fast devsel, latency 64, IRQ 18
            I/O ports at 4000
            Memory at fd5f0000 (64-bit, non-prefetchable)
            Memory at fd580000 (64-bit, non-prefetchable)
            Capabilities: [50] Power Management version 3
            Capabilities: [68] Express Endpoint, MSI 00
            Capabilities: [d0] Vital Product Data
            Capabilities: [a8] MSI: Enable+ Count=1/1 Maskable- 64bit+
            Capabilities: [c0] MSI-X: Enable- Count=15 Masked-
    <snip>
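If you want to pull specific fields out of this output in a script, a minimal sketch follows. It assumes the usual multi-line `lspci -v` layout, with one indented attribute per line, and the sample text is the output shown above laid out that way. The function name `parse_lspci_device` is hypothetical.

```python
def parse_lspci_device(block):
    """Split one `lspci -v` device block into header fields and capabilities."""
    lines = [ln.strip() for ln in block.strip().splitlines() if ln.strip()]
    # Header looks like: "<address> <device class>: <device name>"
    address, _, description = lines[0].partition(" ")
    device_class, _, name = description.partition(": ")
    info = {"address": address, "class": device_class, "name": name,
            "capabilities": []}
    for line in lines[1:]:
        key, _, value = line.partition(": ")
        if key == "Capabilities":
            info["capabilities"].append(value)
        elif key == "Subsystem":
            info["subsystem"] = value
    return info


SAMPLE = """
03:00.0 Serial Attached SCSI controller: LSI Logic / Symbios Logic SAS2008 PCI-Express Fusion-MPT SAS-2 [Falcon] (rev 03)
        Subsystem: Dell 6Gbps SAS HBA Adapter
        Flags: bus master, fast devsel, latency 64, IRQ 18
        I/O ports at 4000
        Memory at fd5f0000 (64-bit, non-prefetchable)
        Memory at fd580000 (64-bit, non-prefetchable)
        Capabilities: [50] Power Management version 3
        Capabilities: [68] Express Endpoint, MSI 00
        Capabilities: [d0] Vital Product Data
        Capabilities: [a8] MSI: Enable+ Count=1/1 Maskable- 64bit+
        Capabilities: [c0] MSI-X: Enable- Count=15 Masked-
"""

device = parse_lspci_device(SAMPLE)
msi_enabled = any("MSI: Enable+" in cap for cap in device["capabilities"])
print(device["address"], device["subsystem"], "MSI enabled:", msi_enabled)
```

In this output, plain MSI is enabled while MSI-X is not, which is the kind of detail that is easy to miss when reading the raw text.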

It’s also worth checking which driver and firmware versions are loaded for this device:

    [root@freenas] ~# dmesg | grep "mps0: Firmware"
    mps0: Firmware: 20.00.07.00, Driver: 21.01.00.00-fbsd

Normally, FreeNAS would complain about firmware and driver mismatches if the 20.x firmware isn’t matched with the 20.x driver, but apparently the 21.x driver included in FreeNAS 9.10 is indeed compatible with the 20.x firmware. This definitely makes sense to me given there isn’t even a P21 firmware out yet.
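To keep an eye on this across upgrades, the versions can be pulled out of that dmesg line and their major numbers compared. The sketch below is a rough illustration, not the check FreeNAS itself performs: comparing only the leading number is my own simplification, and the function names are hypothetical.

```python
import re


def parse_mps_versions(line):
    """Extract (firmware, driver) version strings from an mps 'Firmware:' line."""
    match = re.search(r"Firmware:\s*([\d.]+),\s*Driver:\s*([\d.]+)", line)
    if match is None:
        raise ValueError("not an mps firmware line: %r" % line)
    return match.group(1), match.group(2)


def major(version):
    """Return the leading number of a dotted version such as 20.00.07.00."""
    return int(version.split(".")[0])


line = "mps0: Firmware: 20.00.07.00, Driver: 21.01.00.00-fbsd"
firmware, driver = parse_mps_versions(line)
if major(firmware) == major(driver):
    print("firmware and driver major versions match")
else:
    print(f"firmware P{major(firmware)} vs driver {major(driver)}.x: "
          "check compatibility before relying on this combination")
```

For the line above, the sketch reports a P20 firmware against a 21.x driver, which is the combination discussed here.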

So there you have it! PCI passthrough works great, and is as close as you can get to a physical FreeNAS build on an ESXi host.

FreeNAS 9.10 Lab Build Series:

Part 1 – Defining the requirements and flashing the Dell PERC H200 SAS card.

Part 2 – FreeNAS and VMware PCI passthrough testing.

Part 3 – Cooling the toasty Dell PERC H200.

Part 4 – A close look at the Dell PowerEdge T110.

Part 5 – Completing the hardware build.