Today I’ll be looking at a feature I’ve wanted to examine for some time – Beacon Probing. I hope to take a fresh look at this often misunderstood feature, explore the pros, cons, quirks and take a bit of a technical deep-dive into its inner workings.

According to the vSphere Networking Guide, we see that Beacon Probing is one of two available NIC failure detection mechanisms. Whenever we’re dealing with a team of two or more NICs, ESXi must be able to tell when a network link is no longer functional so that it can fail-over all VMs or kernel ports to the remaining NICs in the team.

Beacon Probing

Beacon probing takes network failure detection to the next level. As you’ve probably already guessed, it does not rely on NIC link-state alone to detect a failure. Let’s have a look at the definition of Beacon Probing in the vSphere 6.0 Networking Guide on page 92:

“[Beacon Probing] sends out and listens for beacon probes on all NICs in the team and uses this information, in addition to link status, to determine link failure.”

This statement sums up the feature very succinctly, but obviously there is a lot more going on behind the scenes. How do these beacons work? How often are they sent out? Are they broadcast or unicast frames? What do they look like? How do they work when multiple VLANs are trunked across a single link? What are the potential problems when using beacon probing?

Today, we’re going to answer these questions and hopefully give you a much better look at how beacon probing actually works.

The Need for Beacon Probing

The default method of network failure detection in ESXi is ‘Link Status Only’. This is about as simple as it gets. If the link is up, use the adapter – if the link is down, don’t use it. This does make some rather bold assumptions though – namely that a failure of the NIC or switch would always cause the link to go down. In many cases, this assumption would be a good one, but there are numerous situations where this doesn’t happen.

A classic example is older blade chassis systems. Blades with multiple independent chassis switches don’t always pass link state back to the blade NICs should one of the chassis switches go down. Also, if one of the uplinks from a chassis switch to the physical switch fails, link state may not be correctly reflected to the blades using that link.

Another common occurrence would be a ‘firmware hang’ on a network adapter. In this scenario, the card has hung – it’s no longer able to process Ethernet frames and is basically a paperweight sitting in a PCI Express slot in the host. The switch may have correctly observed that the link has gone down, but in many cases the hypervisor doesn’t know this because the NIC driver is not able to reliably interact with the adapter firmware. So on the switch it’s link-down, but according to the host, it’s link-up. In this situation, numerous VMs and vmkernel ports would remain associated with this troubled vmnic and would most likely have lost all network connectivity.

And last but certainly not least, user error is one of the more common problems. All it takes is a network administrator incorrectly identifying a physical switch port and putting it into the wrong VLAN. In this situation, a number of VMs may no longer be able to communicate because one of the adapters is now in the wrong broadcast domain. From a low-level perspective, the link state remains up and ESXi will most certainly continue to try to use the adapter as if nothing has changed.

The Test Lab

In order to get a good feel for how beacon probing works, I’ve set up a simple lab environment using a single ESXi 6.0 host. I’ve configured three vmnics on a standard switch as follows:

![]()

As you can see, I’ve got a total of six VMs all spread across two VLANs – VLAN 7 and VLAN 8.

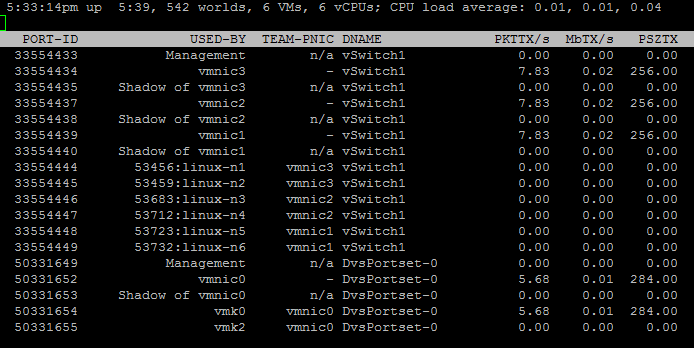

Running esxtop from an SSH session on the host, we can see the VM to physical adapter mapping. With the default ‘route based on originating virtual port ID’ load balancing method, each VM will be associated with only one available vmnic. With all six VMs powered up, we can see an even 2 to 1 ratio of VMs to vmnics on vSwitch1.

Each physical adapter is connected to a physical switch port with 802.1q VLAN trunking enabled. VLAN 7 and VLAN 8 are allowed and functional on all three ports.

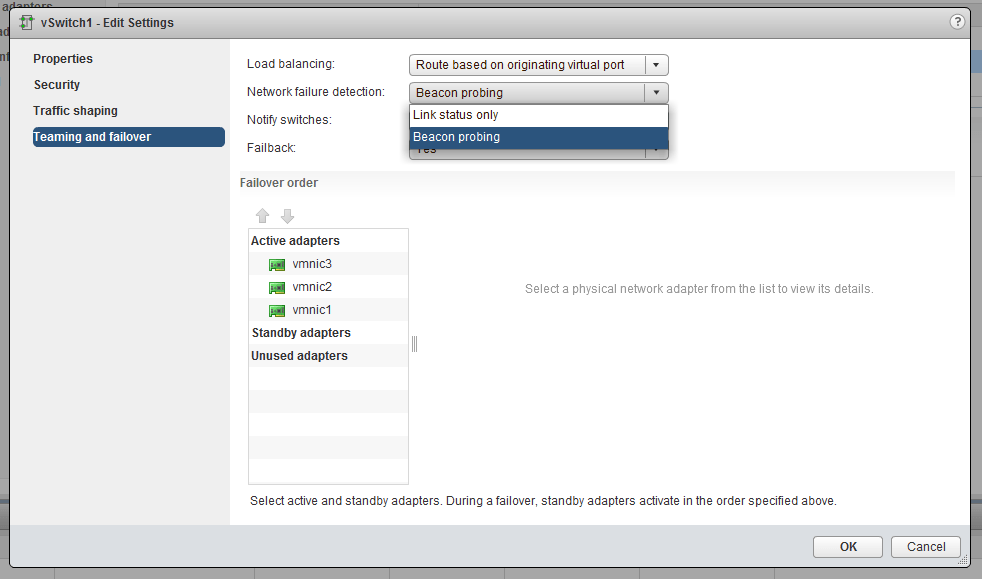

Once I had the vSwitch configured, I went ahead and enabled beacon probing.

Interestingly, this can be done at the vSwitch level, where it is inherited by all portgroups by default, or on specific portgroups. The only reason to enable it per portgroup would be to fail over certain VMs/VLANs when probes are lost, while failing over others only when an adapter’s link state goes down. Most people would just turn it on for the entire vSwitch as I have done above.

Now, in order to take a look at the probes themselves, I’d need to do a packet capture. There are several ways this can be done, but since I’m using physical hosts, I’ll use the handy pktcap-uw tool included in ESXi 5.5 and newer releases.

Note: To simplify the capture and keep things looking organized, I shut down the VMs in VLAN 8 so that probes would be sent out on VLAN 7 only. I’ll be using VLAN 8 in a later test. Also, because pktcap-uw is unidirectional, I did two separate captures – one for each direction – and then merged them in Wireshark.

Beacon Probe Frame Analysis

At this point, we’re going to take a closer look at the structure of a beacon probe – probably much closer than you’d ever care to see. Feel free to skip to the next section unless you are really curious and enjoy Ethernet frame analysis.

Before we dig into an individual frame, let’s look at the overall behavior. I was pretty surprised by the number of frames I observed in the brief capture period. In approximately 22 seconds, vmnic1 observed 178 frames.

Per the vSphere 6.0 Networking Guide:

“Sends out and listens for Ethernet broadcast frames, or beacon probes, that physical NICs send to detect link failure in all physical NICs in a team. ESXi hosts send beacon packets every second.”

I think emphasis should be on packets – plural, not singular. If I sort on the source virtual MAC of vmnic1 only, we can see the interval in which it probes the other two adapters in the team. Interestingly, every second, it sends two probes a few milliseconds apart.

I believe that this is by design. Beacon probing is sensitive to packet loss, so sending two probes at a time helps to avoid false positives caused by occasional dropped frames. With the filter removed, the same pattern shows up in the other direction: two incoming frames each second from each of the other two adapters in the team.
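As a rough sanity check on the 178 frames seen in about 22 seconds, the arithmetic can be sketched as below. This assumes what the capture suggests rather than anything documented: each uplink sends a burst of two probes per second to each teammate, the capture merges both directions, and only one VLAN is active. The function name is my own.

```python
def beacon_frames_per_second(team_size: int, probes_per_burst: int = 2) -> int:
    """Beacon frames one uplink sends plus receives each second on one VLAN.

    Assumption: every uplink probes every other team member with
    `probes_per_burst` frames once per second.
    """
    peers = team_size - 1
    sent = peers * probes_per_burst
    received = peers * probes_per_burst
    return sent + received


capture_seconds = 22
expected = beacon_frames_per_second(team_size=3) * capture_seconds
print(expected)  # 176, close to the 178 frames observed on vmnic1
```

The small difference is easy to explain by the capture window not lining up exactly with the one second probe interval.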

Another thing that you may find odd is that the beacon frames look like what you’d expect from a virtual machine or kernel port – not from a physical adapter. The MAC addresses all contain the OUI 00:50:56.

Taking a quick look at vmnic2 on this host for example, we can see that it has the following MAC address with an Intel OUI:

[root@esx-a1:~] esxcfg-nics -l |grep -i vmnic2

vmnic2 0000:01:00.0 igb Up 1000Mbps Full 90:e2:ba:0e:47:aa 1500 Intel Corporation 82580 Gigabit Network Connection

But in the packet captures, we can clearly see that the frames coming in from vmnic2 have a source MAC of 00:50:56:1e:47:aa. From what I can gather, the first 28 bits of the MAC address are a virtual prefix of sorts, and the remaining 20 bits are taken from the actual physical MAC of the adapter.

For probing purposes, ESXi uses what it calls the ‘Virtual MAC Address’ assigned to the physical adapter. Unfortunately, this can’t be found in the UI anywhere, but you can get it with vsish from the command line:

[root@esx-a1:~] vsish -e get /net/pNics/vmnic2/virtualAddr

Virtual MAC Address {

Virtual MAC address:00:50:56:1e:47:aa

}

As you can see above, this ‘Virtual MAC’ matches what we observe in the packet captures.
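The mapping I observed can be written down as a small sketch. This is only the pattern inferred from the adapters on this host (a fixed 00:50:56:1 prefix followed by the last five hex digits of the physical MAC), not a documented algorithm, and the function name is my own.

```python
def beacon_virtual_mac(physical_mac: str) -> str:
    """Guess the beacon 'virtual MAC' for a physical NIC MAC address.

    Assumption: the first 28 bits are the fixed prefix 00:50:56:1 and the
    last 20 bits are copied from the physical MAC, as seen in this lab.
    """
    digits = physical_mac.replace(":", "").replace("-", "").lower()
    if len(digits) != 12:
        raise ValueError("expected a 48-bit MAC address")
    int(digits, 16)  # raises ValueError if any character is not hexadecimal
    virtual = "0050561" + digits[7:]
    return ":".join(virtual[i:i + 2] for i in range(0, 12, 2))


print(beacon_virtual_mac("90:e2:ba:0e:47:aa"))  # 00:50:56:1e:47:aa (vmnic2)
print(beacon_virtual_mac("00:25:90:0b:1e:13"))  # 00:50:56:1b:1e:13 (vmnic1)
```

Both results match the source MACs seen in the capture, which is reassuring, but two samples from one host are hardly proof of the general rule.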

So now that we can see the frequency and pattern, let’s examine a freshly captured beacon probe frame. This example is a beacon sent from vmnic1 (00:50:56:1b:1e:13) to vmnic2 (00:50:56:1e:47:aa).

At first glance, it doesn’t look like much and is relatively small at only 256 bytes in size. As you can see, these frames aren’t broadcasts, which is a common misconception.

These beacon probes can be easily identified by their unique ethertype. An ethertype is a four hexadecimal digit (two byte) value assigned to each layer two protocol and governed by the IEEE. VMware’s beacon probe frame is identified as ethertype 0x8922, and appears in the IEEE’s official list of ethertypes.

Interestingly, the IEEE listing for ethertype 0x8922 has some additional information that will help us to decode exactly what is in the data portion of this Ethernet frame.

“Following the ethernet header, the packet contains a 4-byte magic number, a 2-byte length field, and a 1-byte type field. Additional data are type dependent. For a “beacon” packet, the data includes a unique host identifier, a sequence number, a source virtual port identifier, and the name of the physical adapter.”

Let’s see if we can decode the frame we’ve captured.

Magic Number (4-bytes): 0x026F7564

Length (2-bytes): 0x00E7 – 231 bytes in decimal

Type (1-byte): 0x03 – Assuming this means ‘beacon probe’.

The length field seems to add up correctly. The data section is 238 bytes in total, seven of which are composed of the magic number, length and type, so 231 bytes would be the ‘type specific’ data. From what I’ve seen, the magic number is a sort of identifier and is always the same in all beacons.
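The seven-byte header described above can be unpacked with a few lines of Python. This is a sketch under two assumptions of mine: the fields are in network (big-endian) byte order, which is how the values read in the capture, and the type-specific data is stood in for by zero bytes rather than the real payload. The class and function names are hypothetical.

```python
import struct
from typing import NamedTuple


class BeaconHeader(NamedTuple):
    magic: int
    length: int
    frame_type: int


# Assumption: big-endian, no padding: 4-byte magic, 2-byte length, 1-byte type.
HEADER = struct.Struct(">IHB")


def parse_beacon_header(payload: bytes) -> BeaconHeader:
    """Decode the fixed header that follows the Ethernet header."""
    if len(payload) < HEADER.size:
        raise ValueError("payload shorter than the 7-byte header")
    return BeaconHeader(*HEADER.unpack_from(payload))


# Header bytes from the captured frame, followed by 231 placeholder bytes.
sample = bytes.fromhex("026f756400e703") + bytes(231)
header = parse_beacon_header(sample)
print(hex(header.magic), header.length, header.frame_type)  # 0x26f7564 231 3
print(HEADER.size + header.length == len(sample))  # True: 7 + 231 = 238
```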

According to VMware’s IEEE listing for this protocol type, beacon probes would contain additional data, including the host’s UUID, a sequence number and the virtual port identifier. Let’s see if we can identify any of these in the data portion of the frame.

First, let’s start with the system UUID. I could determine my host’s ID using the following command:

[root@esx-a1:~] esxcfg-info |grep "System UUID"

<snip>

|----System UUID..............................................58fa49ef-9323-62e9-6104-90e2ba0e47aa

![]()

Interestingly, the system UUID was in the probe not once, but twice. I’m not clear what the purpose of it being listed twice is, but this information is useful to determine which host this probe came from.

Next, let’s see if we can find the port identifiers. The easiest way to determine which local vSwitch ports are used by the uplinks is to use the net-stats command:

[root@esx-a1:~] net-stats -l

PortNum Type SubType SwitchName MACAddress ClientName

33554434 4 0 vSwitch1 90:e2:ba:0e:47:ab vmnic3

33554437 4 0 vSwitch1 90:e2:ba:0e:47:aa vmnic2

33554439 4 0 vSwitch1 00:25:90:0b:1e:13 vmnic1

<snip>

Converting each of these values into hexadecimal gives us the following:

vmnic1: 0x2000007

vmnic2: 0x2000005

vmnic3: 0x2000002
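For anyone repeating this on their own host, the conversion is a one-liner. The sketch below also prints each port number as four bytes in both byte orders, since I can’t say for certain which order the frame uses; the helper name is my own.

```python
def port_id_forms(port_num: int) -> tuple:
    """Return (hex string, big-endian bytes, little-endian bytes) for a port ID."""
    return (
        hex(port_num),
        port_num.to_bytes(4, "big").hex(" "),
        port_num.to_bytes(4, "little").hex(" "),
    )


# Port numbers taken from the net-stats output above.
uplink_ports = {"vmnic1": 33554439, "vmnic2": 33554437, "vmnic3": 33554434}

for name, port in sorted(uplink_ports.items()):
    print(name, *port_id_forms(port), sep="  ")
# First line printed: vmnic1  0x2000007  02 00 00 07  07 00 00 02
```

Searching the hex dump for either byte pattern is a quick way to locate the field.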

In the same frame, we can clearly see that the source vmnic port number is indeed contained in the data:

![]()

What I found interesting, however, is that only one of the two probes sent from vmnic1 to vmnic2 during the same one second period included both the source and destination port numbers. The first included only the source. Below is the second frame that I captured about 20ms later:

![]()

I’m not sure where exactly I’d find the “names of the adapters” per the IEEE description, but I can definitely take a guess as to where the sequence number can be found:

![]()

Looking at the frames chronologically, I can see that the above highlighted 24 bit field increments as time goes on. I can only assume that this is the sequence number, or a portion of the sequence number.

I’m actually surprised that I found as much as I did in the frame. There is little else in the data field besides zero padding.

So that’s probably way more than you ever wanted to know about a 0x8922 Ethernet frame, but understanding the content of the frame can help us to better understand how beacon probing actually works.

Testing Failover

And now for the fun part. Let’s have a look at how beacon probing behaves during a failure scenario. To simulate this, I’ll simply remove VLAN 7 or VLAN 8 on the physical switch. This will essentially mean that the vmnic is down without actually being link state down.

To begin, I simply removed VLAN 7 from the physical port associated with vmnic3. As shown in an earlier screenshot, the linux-n1 and linux-n2 VMs were both using this adapter.

Within a few seconds of watching in esxtop, I saw both virtual machines get reassigned to other uplinks. In this case, both made their way over to vmnic2.

To my surprise, I saw no alerts, alarms or warnings trigger in the vSphere Web Client or the legacy client. Let’s have a look at vobd.log to see if anything registered:

[root@esx-a1:~] cat /var/log/vobd.log |grep -i vmnic3

<snip>

2017-06-17T19:40:21.274Z: [netCorrelator] 28004257834us: [vob.net.pg.uplink.transition.down] Uplink: vmnic3 is down. Affected portgroup: VLAN 7. 2 uplinks up. Failed criteria: 32

2017-06-17T19:40:23.002Z: [netCorrelator] 28005986635us: [esx.problem.net.redundancy.degraded] Uplink redundancy degraded on virtual switch "vSwitch1". Physical NIC vmnic3 is down. Affected port groups: "VLAN 7"

Notice above that the VLAN 7 portgroup is specifically called out as impacted. This is because I only removed VLAN 7 and not VLAN 8. Beacon probes are still successfully being exchanged in VLAN 8’s broadcast domain.
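If you want to pull these events out of vobd.log in bulk, a regular expression is enough. The sketch below is based only on the message format seen in my log, so other ESXi builds may word it differently; the pattern and function names are my own.

```python
import re
from typing import Optional

# Pattern derived from the vobd.log lines shown above (an assumption for
# any other ESXi version).
TRANSITION_DOWN = re.compile(
    r"\[vob\.net\.pg\.uplink\.transition\.down\] "
    r"Uplink: (?P<uplink>\S+) is down\. "
    r"Affected portgroup: (?P<portgroup>.+?)\. "
    r"(?P<uplinks_up>\d+) uplinks up\. "
    r"Failed criteria: (?P<criteria>\d+)"
)


def parse_transition_down(line: str) -> Optional[dict]:
    """Return the uplink, portgroup and counters from a transition.down line."""
    match = TRANSITION_DOWN.search(line)
    if match is None:
        return None
    event = match.groupdict()
    event["uplinks_up"] = int(event["uplinks_up"])
    event["criteria"] = int(event["criteria"])
    return event


sample = (
    "2017-06-17T19:40:21.274Z: [netCorrelator] 28004257834us: "
    "[vob.net.pg.uplink.transition.down] Uplink: vmnic3 is down. "
    "Affected portgroup: VLAN 7. 2 uplinks up. Failed criteria: 32"
)
print(parse_transition_down(sample))
```

Grouping the parsed events by portgroup makes it easy to see which VLANs lost probes on which uplink.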

Now let’s see what happens when we remove VLAN 8 from the same switch port.

2017-06-17T19:55:08.989Z: [netCorrelator] 28891973146us: [vob.net.pg.uplink.transition.down] Uplink: vmnic3 is down. Affected portgroup: VLAN 8. 2 uplinks up. Failed criteria: 32

2017-06-17T19:55:10.000Z: [netCorrelator] 28892984383us: [esx.problem.net.redundancy.degraded] Uplink redundancy degraded on virtual switch "vSwitch1". Physical NIC vmnic3 is down. Affected port groups: "VLAN 8"

As you can see, this time we see VLAN 8 reported as an ‘affected port group’. Because no VMs in VLAN 8 were using vmnic3, no failover actions were initiated.

So now let’s look at what happens when we trigger the failure of another adapter in the team. I’ll remove VLAN 7 from the switch port where vmnic2 is connected next, but will leave VLAN 8 in place.

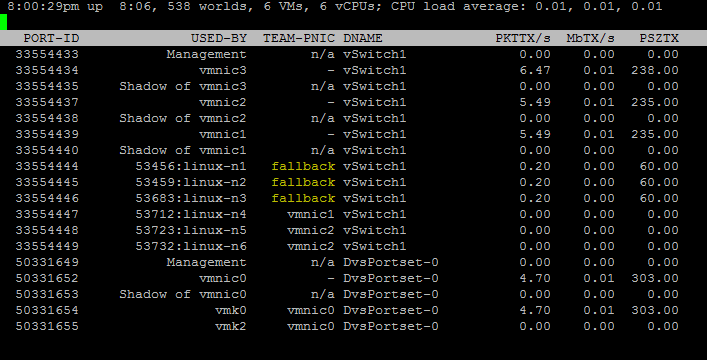

Almost immediately, we see three of the six VMs go into what’s called ‘fallback’ mode, where they are no longer associated with a specific vmnic. Notice that because there are still two of three NICs functional on VLAN 8, linux-n4, linux-n5 and linux-n6 are still assigned to specific adapters. Because only one of three NICs is now functional on VLAN 7, ESXi can no longer determine which NIC is actually broken.

Let’s have a look in vobd.log and see what happened:

2017-06-17T19:59:53.020Z: [netCorrelator] 29176004381us: [vob.net.pg.uplink.transition.down] Uplink: vmnic2 is down. Affected portgroup: VLAN 7. 1 uplinks up. Failed criteria: 32

2017-06-17T19:59:53.096Z: [netCorrelator] 29176080332us: [vob.net.pg.uplink.transition.down] Uplink: vmnic1 is down. Affected portgroup: VLAN 7. 0 uplinks up. Failed criteria: 32

2017-06-17T19:59:55.002Z: [netCorrelator] 29177986144us: [esx.problem.net.connectivity.lost] Lost network connectivity on virtual switch "vSwitch1". Physical NIC vmnic1 is down. Affected port groups: "VLAN 7"

2017-06-17T19:59:55.002Z: [netCorrelator] 29177986406us: [esx.problem.net.redundancy.lost] Lost uplink redundancy on virtual switch "vSwitch1". Physical NIC vmnic2 is down. Affected port groups: "VLAN 7"

We see the usual message about affected port groups, but we also see that ESXi reported vmnic1 as being down for VLAN 7. Remember, I never removed VLAN 7 from the switch port connected to vmnic1. This is basically ESXi’s way of saying all three adapters are in a questionable state now and beacon probing is no longer able to tell which adapter is good, and which is bad.

Shotgunning Traffic

I’m not totally sure where the term ‘shotgunning’ traffic came from, but images come to mind of a double barrel shotgun blasting packets out both barrels simultaneously 🙂

Now that we have VLAN 7 in fallback mode, I want to see if we can observe this effect occurring. In theory, ESXi can no longer distinguish good links from bad in VLAN 7 and should be ‘shotgunning’ VM and vmkernel traffic out all three NICs simultaneously in the hope that some will make it to their destination.

To test this, I’ll run a continuous ping from the linux-n1 virtual machine to its default gateway. While this is happening, I’ll run three packet captures simultaneously:

[root@esx-a1:~] pktcap-uw --uplink vmnic1 --proto 0x01 --dir 1 -o /tmp/vmnic1-dir1.pcap & pktcap-uw --uplink vmnic2 --proto 0x01 --dir 1 -o /tmp/vmnic2-dir1.pcap & pktcap-uw --uplink vmnic3 --proto 0x01 --dir 1 -o /tmp/vmnic3-dir1.pcap &

The above capture basically looks at only outgoing packets (dir 1) and packets with the IP protocol identifier of 0x01, which is ICMP. I’ll be capturing frames leaving vmnic1, vmnic2 and vmnic3 simultaneously. If I’m correct, I should see the same outgoing ICMP packets on all three adapters.

And there you have it. Every single ICMP echo request is sent out three times. You can see this above by observing the duplicate ICMP sequence numbers.

In this particular failure scenario, this is not actually a problem. Because we have a genuine issue with VLAN 7 on vmnic2 and vmnic3, these ICMP packets will never actually make it very far on the network. The upstream switch would drop them and only the ICMP packets leaving vmnic1 would actually make it to the 172.16.7.1 gateway router.

Why Three or More NICs?

This is really the question that comes up repeatedly on beacon probing, and it can take some time to wrap one’s head around the reasoning. To help to illustrate why shotgunning occurs, I’ve put together the following diagrams. The first is a basic flow of beacon probes in a simple topology.

![]()

I’ve put a third switch in the topology to show the type of scenario beacon probing was designed to work with and to show a failure scenario where the link state would remain up on the vmnics. As you can see above, both vmnics are receiving beacons from the other vmnic, and all is well.

But what if we have a failure between pSwitchX and pSwitch2? The link state to the ESXi host would remain up because the switch port connecting to vmnic2 wasn’t impacted.

![]()

Now we have a situation where beacons are still being sent from both NICs, but the ESXi host knows that something is wrong because no beacons are being received on either NIC. The issue here is that ESXi has no idea where the breakdown is, and which link is the problem. For all ESXi knows, both links may be the issue.

Since there is a chance that one of these two NICs still works, ESXi goes into ‘fallback’ mode and forwards duplicate frames out both NICs simultaneously as we saw in the previous section.

But what if we are using three adapters?

![]()

Clearly, there are more beacons on the wire, but each of the three adapters should be sending out beacons and receiving beacons from two other adapters. Let’s look at a similar failure scenario where the link between pSwitchX and pSwitch2 has gone down:

![]()

ESXi now has more information to work with and can make a proper decision. Both vmnic1 and vmnic3 are still receiving beacons from each other, so ESXi can determine that those two NICs are still good. Because vmnic2 hasn’t received any beacons, it’s determined to be bad and is taken out of service.

Even though ESXi is no longer using vmnic2 for VM or kernel traffic, it does continue to send out beacon probes and will continue to listen for them on the wire. As soon as vmnic2 begins receiving probes again and the other adapters receive vmnic2’s probes, the adapter will be put back into service.
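The two-adapter versus three-adapter reasoning can be captured in a toy model. To be clear, this is my simplification of the behavior in the diagrams and the lab, not ESXi’s actual algorithm: an uplink is trusted if it heard a beacon from at least one teammate, and the team drops into fallback when no uplink can be trusted. All names are hypothetical.

```python
from typing import Dict, Set, Tuple


def classify_uplinks(heard: Dict[str, Set[str]]) -> Tuple[Set[str], Set[str], bool]:
    """Toy model of beacon-based failure detection on one VLAN.

    `heard` maps each uplink to the teammates whose beacons it received.
    Returns (trusted, suspect, fallback); fallback is True when no uplink
    received anything, so good links cannot be told apart from bad ones.
    """
    trusted = {nic for nic, senders in heard.items() if senders}
    suspect = set(heard) - trusted
    fallback = not trusted
    return trusted, suspect, fallback


# Two adapters, path between them broken: neither hears the other.
two_nics = {"vmnic1": set(), "vmnic2": set()}
# Three adapters, only vmnic2 isolated: vmnic1 and vmnic3 still hear each other.
three_nics = {"vmnic1": {"vmnic3"}, "vmnic2": set(), "vmnic3": {"vmnic1"}}

print(classify_uplinks(two_nics)[2])    # True: shotgun out both adapters
print(classify_uplinks(three_nics)[2])  # False: vmnic2 alone is taken out
```

The same model also reproduces the lab result where VLAN 7 was removed from two of three uplinks: nobody hears anybody, so all three adapters end up suspect.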

Beacon Probing Frequently Asked Questions

Q: How quickly does a failover occur when using Beacon Probing?

A: Pretty quickly. In the VLAN test I did above, it took roughly 3-4 seconds before I saw uplink reassignment occur.

Q: Can I use Beacon Probing with ‘Route Based on IP Hash’ or LACP load balancing?

A: No. The hashing algorithms used by these load balancing methods will be applied to beacon frames as well. This will result in beacons leaving unpredictable adapters in the team and can lead to false positives and other oddities. Don’t try it – it won’t work properly.

Q: I have enabled beacon probing, but don’t see any beacons in my packet capture. Why?

A: ESXi will only begin sending out and listening for beacons when something on the host is connected to a portgroup/vswitch with beacon probing enabled. This helps to reduce unnecessary traffic on the wire – especially if a lot of VLANs are configured but not all used. As soon as a VM is connected to a portgroup/vswitch with it enabled, beacons will immediately begin to flow.

Q: Does ESXi still use link state information for failover when beacon probing is enabled?

A: Yes. As outlined in the vSphere Networking Guide, beacon probes as well as link state information are used to make failover decisions. Link state will continue to be the primary method used. If a NIC’s link goes down, the card is no longer used because it can’t possibly function in a link down state.

Q: If beacons are lost on only one VLAN, will all of my VMs on that adapter fail over or only the ones associated with the troubled VLAN?

A: ESXi sends and monitors beacon probes on each layer-2 broadcast domain (VLAN) independently. When probes fail on only one VLAN, ESXi considers that portgroup an ‘affected portgroup’ and fails over any VMs on that adapter on that VLAN only. VMs in other functional VLANs will continue to use the adapter.

Q: Do I really need to use a minimum of three network adapters with beacon probing?

A: Not necessarily. Beacon probing will work with as few as two adapters. But, as mentioned earlier, if a failure occurs, ESXi can’t reliably determine which network adapter has gone down without a minimum of three adapters in a NIC team. If the link-state is up on both adapters when beacons cease, ESXi will ‘shotgun’ duplicate frames out both adapters.

Q: What’s the point of using three adapters when I have only two redundant switch fabrics?

A: This is one of the key points that makes the ‘three adapter’ recommendation pretty weak. If one switch or stack went down, you could potentially have two of three adapters down anyway. Any false positive because of that switch fabric would also result in shotgunning out all three adapters. Using four adapters, two to each switch fabric, would actually be a better recommendation.

Q: Are Beacon Probes L2 broadcast frames?

A: This is actually a very common misconception. Beacon frames are always L2 unicast frames addressed to the assigned virtual MAC address of the other adapters in the team.

Q: In a packet capture, my beacon probes are coming from MAC addresses that don’t exist on my ESXi host. Why?

A: As mentioned in the frame analysis section above, ESXi uses what’s referred to as the ‘Virtual Address’ associated with the physical adapter. This will usually be a 24-bit 00:50:56 VMware OUI value, followed by a ‘1’ and then the remaining portion of the actual physical MAC. For example, a physical MAC of AA:AA:AA:AA:AA:AA would usually translate to 00:50:56:1A:AA:AA.

Closing Thoughts and My Personal Opinion

Although Beacon Probing does work, I tend to think of it as a legacy feature that’s outlived its usefulness. Today, NIC firmware and drivers have matured quite a bit and blade systems are a lot better at reporting link state correctly. We also have features like ‘VLAN/MTU Health Check’ that can help to detect and alert on VLAN inconsistencies.

As we saw, beacon probing dumps a lot of L2 traffic onto the wire, and that increases as the number of VLANs and number of hosts increase. There is no denying that there is an overhead cost associated with this feature.

And then of course there is the elephant in the room – the shotgunning effect. Using three or more NICs may not be a realistic expectation for many people. If you experience packet loss or a false-positive for some reason, you are going to see a ton of duplicate frames on the wire. Depending on the applications you use, this could be a serious problem and a risk not worth introducing.

On the flip-side, if you have NICs that are prone to firmware hangs, switches that don’t always go link-down or older blade systems that are flaky in that regard, beacon probing may save you from outages.

In a nutshell, beacon probing is disabled by default for a reason. My simple advice is to leave it turned off unless you have a good reason to turn it on.

Holy sh**! Ohh how little do I know… while I am not a networking person per se, I am teaching vSphere stuff since almost a decade now. Never knew THAT much detail about Beacon Probing, mostly that it’s not used as nobody knows about it (or cares?) – thanks for that awesome input! Even it won’t be of too much use most likely, but that happens to knowledge frequently ;).

Get yourself a decent drink, you earned it. If we ever should meet in person, the next one is on me!

BR from Germany

Steffen

Great stuff, thank-you

This is exactly the type of explanation I needed to understand what was going on in my network. Extremely detailed and well written. Thank you!

And I note.

Q: Can I use Beacon Probing with ‘Route Based on IP Hash’ or LACP load balancing?

A: No. The hashing algorithms used by these load balancing methods will be applied to beacon frames as well. This will result in beacons leaving unpredictable adapters in the team and can lead to false positives and other oddities. Don’t try it – it won’t work properly.

Aaaahhhhhhhhhhhhhh

Thank you for this. I was very confused why 3 or more NICs would be needed. I only have 2 10Gbps NICs, one to each switch (which are interconnected to each other) for NFS storage. I have 2 other NICs but they both go to two core networking switches that have the ability to do MLAG, so active/active. The storage switches not so much. So I was looking for a way to use HA to power on machines on another host if storage is lost on one ESXi host due to the NIC still reporting it is up (thanks to the blade chassis connection), but the SFP actually not shooting light, so on the switch end it shows interface down.

very well explained, thanks for write up

I ran across this post while investigating why network kept dropping during a switch reboot.

You mention a “classic example” of older blade chassis with independent switches not passing link-state back to the blades. I couldn’t find anything mentioning this behavior for Dell or any other chassis. Do you have documentation on this?

Thank you very much for this article. It’s very useful to have it, because the beacon probing KB refers to a post from 2008.