ARP suppression is one of the fundamental features that make NSX scalable. By intercepting ARP requests from VMs before they are broadcast on a logical switch, the hypervisor can look up the answer in its own cache or query the NSX control cluster. If an ARP entry exists on the host or the control cluster, the hypervisor can respond directly, avoiding a costly broadcast that would likely need to be replicated to many hosts.
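To make that lookup order concrete, here is a minimal Python sketch of the decision the hypervisor makes for an intercepted ARP request. It is a simplified model, not NSX code: `resolve_arp`, the dictionary cache and the `query_controller` callback are hypothetical stand-ins for the host’s ARP cache and the control cluster lookup.

```python
from __future__ import annotations

from typing import Callable, Dict, Optional, Tuple


def resolve_arp(
    target_ip: str,
    local_cache: Dict[str, str],
    query_controller: Callable[[str], Optional[str]],
) -> Tuple[Optional[str], str]:
    """Hypothetical model of ARP suppression; returns (mac, where_answered)."""
    # 1. The host's own cache answers first; nothing leaves the host.
    mac = local_cache.get(target_ip)
    if mac is not None:
        return mac, "local"
    # 2. Otherwise ask the control cluster over the control plane, not by broadcast.
    mac = query_controller(target_ip)
    if mac is not None:
        return mac, "controller"
    # 3. Neither knows the IP: the request must be broadcast on the logical
    #    switch and replicated to other hosts, which is what suppression avoids.
    return None, "broadcast"


# Usage with made-up addresses; dict.get stands in for the controller query.
controller_table = {"172.16.10.20": "00:50:56:01:02:03"}
print(resolve_arp("172.16.10.20", {}, controller_table.get))
# ('00:50:56:01:02:03', 'controller')
```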

ARP suppression has existed in NSX since the beginning, but it was only available for VMs connected to logical switches. Up until NSX 6.2.4, the DLR kernel module did not benefit from ARP suppression, so every ARP request for an uncached entry had to be broadcast. Unfortunately, the DLR, like most routers, needs to ARP frequently. This is especially true in NSX, where logical switches and efficient east-west DLR routing make it easy to split workloads into many L3 segments.

Although the DLR in 6.2.4 and later includes code to take advantage of ARP suppression, many deployments are likely not benefiting from it because of a recently identified problem.

VMware KB 51709 briefly describes this issue and notes the following conditions:

“DLR ARP Suppression may not be effective under some conditions which can result in a larger volume of ARP traffic than expected. ARP traffic sent by a DLR will not be suppressed if an ESXi host has more than one active port connected to the destination VNI, for example the DLR port and one or more VM vNICs.”

What isn’t clear in the KB article, but can be inferred from the solution, is that the problem is related to VLAN tagging on logical switch dvPortgroups. Any logical switch dvPortgroup with a VLAN ID specified is affected by this problem.

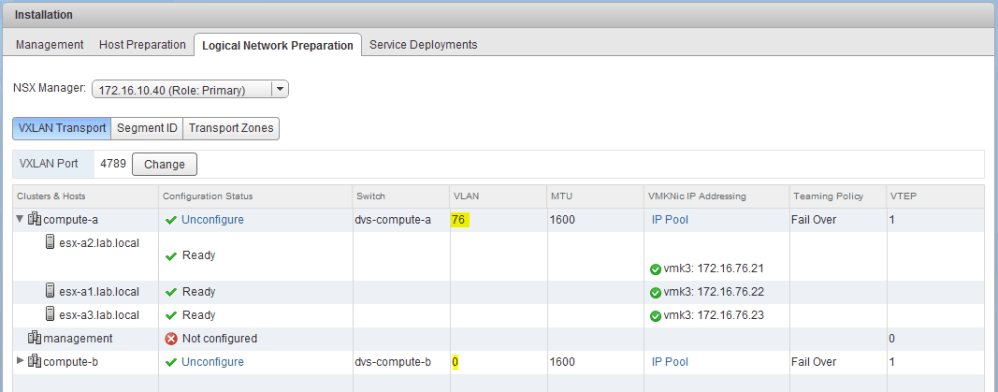

This needs some further explanation. As you are probably aware, during host preparation, we have the opportunity to specify a VLAN for VXLAN VTEP creation. For example, in my lab, you can see that cluster compute-a is prepared with VLAN ID 76 for the VXLAN underlay networking:

I also have another cluster called compute-b, which is configured with VLAN 0. VLAN 0 isn’t a usable VLAN ID (802.1Q reserves it for priority-tagged frames), so NSX, and vSphere in some places, treat it as another way of saying ‘None’. In this case, the VTEPs add no VLAN tag to the frames they send. In my lab environment, hosts in compute-b would benefit from DLR ARP suppression, but hosts in compute-a would not.

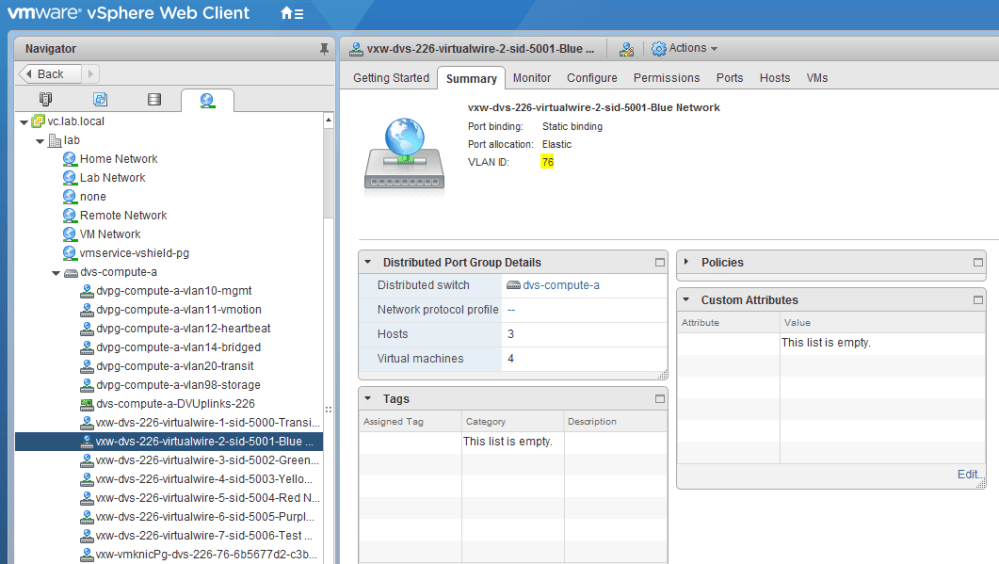

As clusters are added to the transport zones, you’ll notice that dvPortgroups will be created in a 1:1 ratio with logical switches that are configured in the environment. Each of these portgroups will have a name starting with vxw-dvs-xxx-virtualwire, where ‘xxx’ is the moref identifier of the distributed switch. Below is an example called vxw-dvs-226-virtualwire-2-sid-5001-Blue Network:

As you can see above, the VTEP VLAN ID configured during host preparation is automatically inherited for each of these generated dvPortgroups.
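Because the generated names follow a fixed pattern, they are easy to pick apart in a script. The Python sketch below assumes every logical switch portgroup matches the `vxw-dvs-<id>-virtualwire-<n>-sid-<vni>-<name>` shape of the example above. That pattern is inferred from a single name, so treat `parse_virtualwire_name` as a hypothetical helper and verify it against your own environment.

```python
import re
from typing import Dict, Optional, Union

# Assumed pattern, inferred from "vxw-dvs-226-virtualwire-2-sid-5001-Blue Network".
_VIRTUALWIRE_RE = re.compile(r"vxw-dvs-(\d+)-virtualwire-(\d+)-sid-(\d+)-(.+)")


def parse_virtualwire_name(pg_name: str) -> Optional[Dict[str, Union[str, int]]]:
    """Split a logical switch dvPortgroup name into its parts, or return None."""
    match = _VIRTUALWIRE_RE.fullmatch(pg_name)
    if match is None:
        return None  # not a logical switch portgroup (e.g. the VTEP portgroup)
    dvs_id, wire_id, vni, ls_name = match.groups()
    return {
        "dvs_moref": f"dvs-{dvs_id}",                # distributed switch moref
        "logical_switch": f"virtualwire-{wire_id}",  # NSX logical switch ID
        "vni": int(vni),                             # segment ID, e.g. 5001
        "name": ls_name,                             # display name; may contain spaces
    }


print(parse_virtualwire_name("vxw-dvs-226-virtualwire-2-sid-5001-Blue Network"))
# {'dvs_moref': 'dvs-226', 'logical_switch': 'virtualwire-2', 'vni': 5001, 'name': 'Blue Network'}
```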

Simply put, if you specified a VLAN during VXLAN preparation, your logical switch dvPortgroups will carry that VLAN ID and you won’t benefit from DLR ARP suppression. Because of the bug, ARP suppression is simply skipped for DLR LIFs attached to logical switches whose portgroups have a non-zero VLAN ID.
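The conditions described so far can be summarized as a small predicate. This is only a model of the behavior as described in this post, for releases affected by the bug, and not VMware’s code. `dlr_arp_suppression_applies` is a hypothetical name, and a VLAN type of ‘None’ is represented as 0.

```python
from typing import Tuple


def dlr_arp_suppression_applies(
    nsx_version: Tuple[int, int, int],
    ls_portgroup_vlan: int,
    ip_discovery_enabled: bool,
) -> bool:
    """Model of whether DLR ARP requests on a logical switch get suppressed."""
    if nsx_version < (6, 2, 4):
        return False  # the DLR did not use ARP suppression before 6.2.4
    if not ip_discovery_enabled:
        return False  # ARP suppression is disabled on the logical switch
    # Affected releases skip suppression for DLR LIFs on VLAN-tagged portgroups.
    return ls_portgroup_vlan == 0


# The two lab clusters from this post; the version is a hypothetical affected release.
lab_version = (6, 2, 4)
print(dlr_arp_suppression_applies(lab_version, 76, True))  # compute-a: False
print(dlr_arp_suppression_applies(lab_version, 0, True))   # compute-b: True
```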

A Functional Workaround

VMware plans to correct this in a future release of the product, but for now, a simple workaround is possible. Although modifying logical switch portgroups is normally not recommended, the VLAN ID on these portgroups has no bearing whatsoever on their functionality, because logical switch traffic is VXLAN-encapsulated and leaves the host through the VTEP vmkernel ports. All that matters is that the correct VLAN ID is set on the vxw-vmknicPg portgroup where those VTEP vmkernel ports reside. This means we can safely remove the VLAN ID from any logical switch vxw-dvs portgroups.

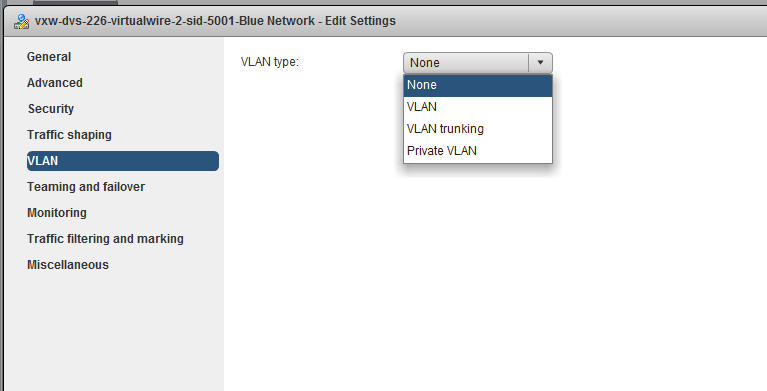

This can be done in the VLAN section of the ‘Edit Settings’ dialog for your logical switch portgroups but setting VLAN type to ‘None’:

If you’ve got a large number of logical switches, you could probably script this fairly easily with PowerCLI. Again, whatever you do, be very careful not to modify the vxw-vmknicPg portgroup. Removing the VLAN ID from that portgroup would most likely cause a dataplane outage!
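PowerCLI is the natural tool for the change itself; the Python sketch below covers only the part that is easy to get wrong, deciding which portgroups to touch. It assumes you have already exported (portgroup name, VLAN ID) pairs from vCenter with whatever tooling you prefer. `portgroups_to_untag` is a hypothetical helper, the inventory names other than the Blue Network example are made up, and the function deliberately never selects the vxw-vmknicPg portgroup.

```python
from typing import Iterable, List, Tuple


def portgroups_to_untag(portgroups: Iterable[Tuple[str, int]]) -> List[str]:
    """Return logical switch portgroups that still carry a VLAN ID.

    Input is (portgroup name, VLAN ID) pairs; VLAN type 'None' is 0.
    """
    selected = []
    for name, vlan_id in portgroups:
        # Never touch the VTEP portgroup: its VLAN ID carries the VXLAN underlay.
        if name.startswith("vxw-vmknicPg"):
            continue
        # Only logical switch (virtualwire) portgroups with a non-zero VLAN.
        if name.startswith("vxw-dvs-") and "-virtualwire-" in name and vlan_id != 0:
            selected.append(name)
    return selected


inventory = [  # hypothetical export
    ("vxw-dvs-226-virtualwire-2-sid-5001-Blue Network", 76),
    ("vxw-dvs-226-virtualwire-3-sid-5002-Green Network", 0),
    ("vxw-vmknicPg-dvs-226", 76),
]
print(portgroups_to_untag(inventory))
# ['vxw-dvs-226-virtualwire-2-sid-5001-Blue Network']
```

Since newly created logical switches inherit the VTEP VLAN ID again, re-running a check like this after adding logical switches is a cheap way to catch any that still need the workaround.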

Short of doing packet captures, there are a few ways to check whether the workaround is working. First, the total number of ARP queries to the control cluster should increase. Note this counter before making the change and check it again afterward:

```
nsx-controller # show control-cluster logical-switches vni-stats 5001
update.member          23
update.vtep            27
update.mac             92
update.mac.invalidate  16
update.arp             10996
update.arp.duplicate   0
query.mac              211
query.mac.miss         0
query.arp              43
query.arp.miss         8
```

What we’re most interested in is the query.arp counter, which is incremented for each lookup against the controller for ARP suppression purposes. Since the DLR generally ARPs a lot, this counter should climb noticeably faster once the workaround is in place.
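If you want to track the counter over time rather than eyeballing it, the output is simple enough to parse. The Python sketch below assumes you have captured the text of the vni-stats command before and after the change (how you collect it is up to you). `parse_vni_stats` and `query_arp_delta` are hypothetical helpers, and the regular expression only assumes dotted counter names followed by an integer.

```python
import re
from typing import Dict

# A dotted counter name (e.g. "query.arp.miss") followed by whitespace and an integer.
_COUNTER_RE = re.compile(r"\b([a-z]+(?:\.[a-z]+)+)\s+(\d+)")


def parse_vni_stats(output: str) -> Dict[str, int]:
    """Map each counter in vni-stats output to its integer value."""
    return {name: int(value) for name, value in _COUNTER_RE.findall(output)}


def query_arp_delta(before: str, after: str) -> int:
    """Number of ARP queries that reached the controller between two snapshots."""
    return parse_vni_stats(after)["query.arp"] - parse_vni_stats(before)["query.arp"]


sample = """update.arp 10996
query.arp 43
query.arp.miss 8"""
print(parse_vni_stats(sample)["query.arp"])  # 43
```

A steadily growing delta after the change suggests that the DLR’s ARP requests are now being answered through the controller instead of being broadcast.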

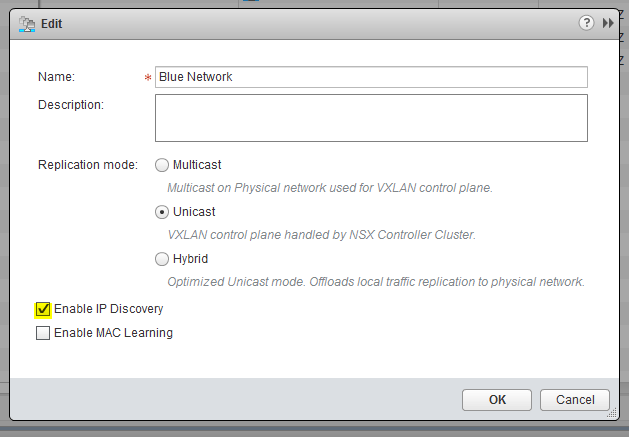

And of course, none of this will work unless ARP suppression is enabled on your logical switches. This is enabled by default, but if things aren’t working as expected you may want to double check:

Ensure ‘Enable IP Discovery’ is checked for your logical switches.

The only downside to this workaround is that any newly created logical switch will again inherit the VTEP VLAN ID. If any logical switches are added or re-created, you’ll need to reapply the workaround to them. Once VMware fixes this issue, you won’t need to worry about this.

Final Thoughts

Because the DLR simply falls back to its pre-6.2.4 behavior when VTEPs use VLAN tags, most people haven’t noticed this issue. It only becomes a problem in very large deployments that truly require suppression to scale. Keeping ARP broadcasts off the logical switches is ideal, but it’s up to you whether to apply the workaround or simply wait for a fix in a future release.

It’s also worth noting that a separate DLR ARP problem can result in longer than usual resolution times. This one applies to ARP broadcasts, not suppression, and VMware outlines it in KB 2151374. Thankfully, it is already fixed in 6.3.4 and 6.2.9. If you happen to be running an older version, this could be even more incentive to apply the workaround outlined above: when ARP suppression is working, it significantly cuts down the number of ARP broadcasts needed and avoids the delay associated with this other problem.

Have any questions or want more information? Please leave a comment below or reach out to me on Twitter (@vswitchzero)