One of the best features of the DFW is the flexibility it provides in using objects in rules instead of IP addresses or groups of IP addresses. For example, as a source or destination you could use a VM in the inventory, a cluster, or a security group built from dynamic membership criteria. Underneath all of this, however, NSX needs to be able to inspect segment and packet headers to enforce the rules. These headers only contain identifying information like IP addresses and TCP ports, so NSX must keep track of which object is associated with which IP address or addresses. And because of the ‘distributed’ nature of the DFW, each of these translations must ultimately reach the ESXi hosts for enforcement.
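To make the idea concrete, here is a minimal Python sketch of an object-based rule being checked against packet header fields. It is purely illustrative and not how NSX implements the DFW: the `Rule` class, the `matches` function, the VM names and the addresses in `TRANSLATIONS` are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical translation table: object name -> IP addresses learned for it.
TRANSLATIONS = {
    "web-vm": {"10.0.0.10"},
    "db-vm": {"10.0.0.20"},
}


@dataclass(frozen=True)
class Rule:
    source: str        # object name, not an IP address
    destination: str   # object name, not an IP address
    dst_port: int
    action: str


def matches(rule, src_ip, dst_ip, dst_port, translations=TRANSLATIONS):
    """Return True if the header fields fall within the rule's translated objects."""
    src_ips = translations.get(rule.source, set())
    dst_ips = translations.get(rule.destination, set())
    return src_ip in src_ips and dst_ip in dst_ips and dst_port == rule.dst_port


rule = Rule(source="web-vm", destination="db-vm", dst_port=3306, action="allow")
print(matches(rule, "10.0.0.10", "10.0.0.20", 3306))  # True
print(matches(rule, "10.0.0.99", "10.0.0.20", 3306))  # False
```

The second call fails only because 10.0.0.99 is missing from the translation table. If the object-to-IP mapping is missing or stale, an object-based rule simply will not match the traffic.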

There are three ways in which NSX can associate IPs with VMs – VMware Tools reporting, ARP snooping and DHCP snooping. The latter two are disabled by default.

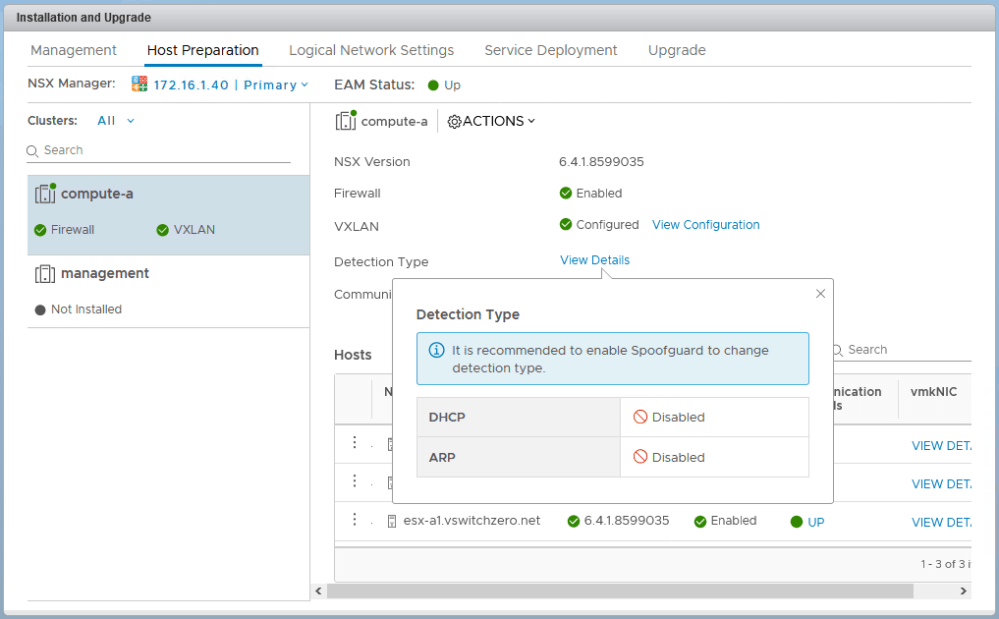

In recent builds of NSX, you can see which detection types are enabled in the host preparation section. As can be seen above, DHCP and ARP snooping are disabled by default, leaving only VMware Tools address reporting.

VMware Tools Reporting

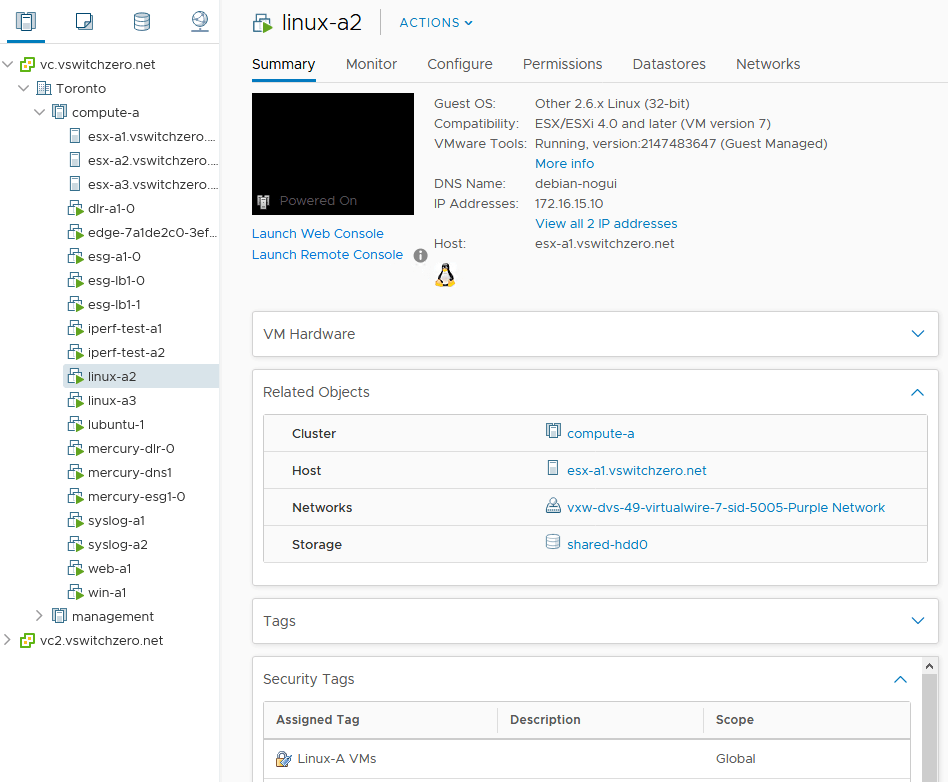

As you have probably noticed, VMs with VMware Tools installed conveniently report their configured IP addresses in the vSphere Client.

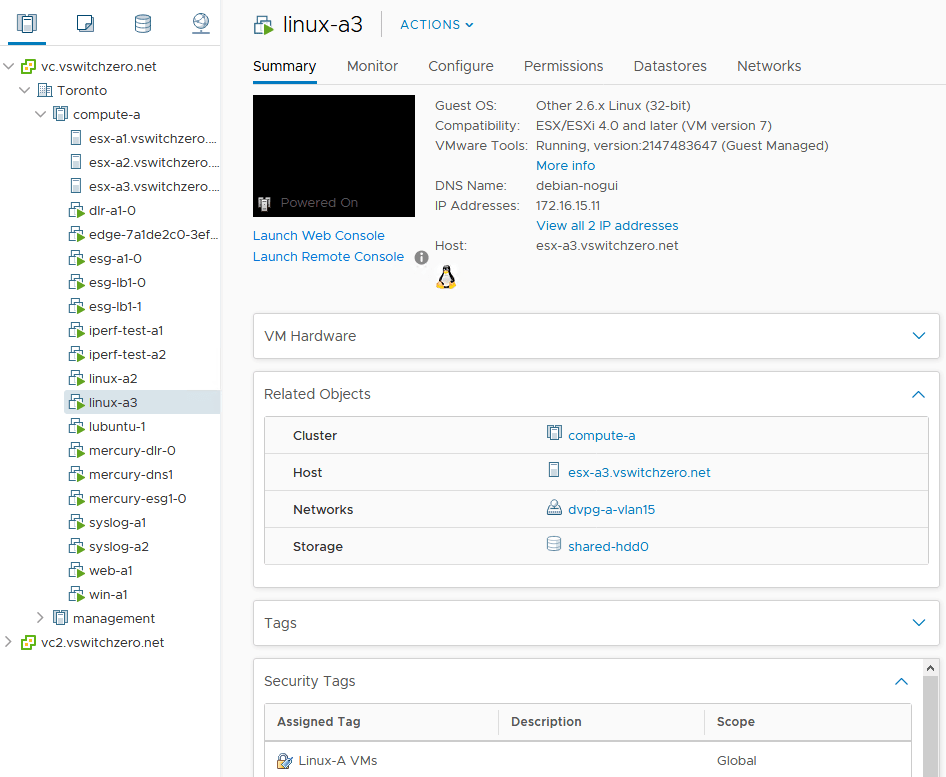

Virtual machine linux-a2 is reporting 172.16.15.10 as well as an IPv6 address on the summary tab in the vSphere Client. This information comes from VMware Tools and will be recorded in the NSX Manager database. Whenever we use a rule that references the VM linux-a2, NSX will look up this IP address for rule enforcement. The rule does not have to name the VM directly. It could reference a parent object instead, like the cluster compute-a, a security group or a logical switch: anything that linux-a2 belongs to.
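The lookup through parent objects can be pictured as a recursive expansion from container to member VMs to reported addresses. The Python sketch below is only an illustration under stated assumptions, not the NSX Manager's actual logic: the `resolve_ips` helper, the dictionary layout and the security group name `sg-linux` are hypothetical, while linux-a2, compute-a and 172.16.15.10 come from the example above. The IPv6 address is left out because its value is not shown here.

```python
# VM -> addresses reported by VMware Tools (value for linux-a2 taken from the text).
VM_ADDRESSES = {
    "linux-a2": {"172.16.15.10"},
}

# Container -> direct members. "sg-linux" is a hypothetical security group
# that includes the cluster compute-a, to show nested containers.
CONTAINERS = {
    "compute-a": {"linux-a2"},
    "sg-linux": {"compute-a"},
}


def resolve_ips(obj, seen=None):
    """Expand a VM or container name into the set of IPs known for it."""
    seen = set() if seen is None else seen
    if obj in seen:          # guard against circular membership
        return set()
    seen.add(obj)
    if obj in VM_ADDRESSES:  # a VM: return its reported addresses
        return set(VM_ADDRESSES[obj])
    ips = set()
    for member in CONTAINERS.get(obj, ()):  # a container: expand each member
        ips |= resolve_ips(member, seen)
    return ips


print(sorted(resolve_ips("linux-a2")))   # ['172.16.15.10']
print(sorted(resolve_ips("compute-a")))  # ['172.16.15.10']
print(sorted(resolve_ips("sg-linux")))   # ['172.16.15.10']
```

Whichever object the rule names, the result is the same set of addresses. That is why a VM with no reported IP drops out of every rule that references it, directly or through a parent object.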