There are many different approaches you can take when building a VMware home lab, but I always prefer to custom-build tower systems. This allows me to select the components I want – the fans, heatsinks, PSU – to keep the noise to a minimum. Even though I keep the lab in the basement, I really don’t like to hear it running. I don’t need the density that a rack provides, and I’m quite happy to have my nearly silent towers humming along on a wire shelf instead. Not to mention that lower noise usually equates to lower power consumption and, of course, it keeps my wife happy as well – the most critical metric of all.

I recently got around to upgrading my three ancient compute nodes. I have been using the Dynatron R13 1U copper heatsinks that came with the motherboards I bought on eBay. They work, and keep the CPUs relatively cool, but as you can imagine, noise was not a key consideration in their design. They are as compact and as efficient as possible at only an inch tall. At idle, the Supermicro X9SRL-F keeps them at a low RPM, but put any load on the systems and you’re quickly at 7,500 RPM and the noise is pretty unbearable. That’s no problem for a datacenter, but it is for a home lab.

Today I’ll be taking a bit of a departure from my regular posts and will be doing an in-depth review of two high-end Noctua heatsinks. Noctua was kind enough to send me a review sample of not one, but two of their Xeon heatsinks – the NH-U9DX i4 and the NH-D9DX i4 3U.

Noctua

Noctua is an Austrian company well known for their low noise fans and high-end heatsinks. I’ve been using Noctua heatsinks for ages. In fact, I reviewed some of their original heatsinks and fans many years ago when I used to write hardware reviews. This included their original NH-U12P, the NH-C12P and the smaller NH-U9B. Back then, I praised them for their high-quality construction, near silent operation, excellent mounting hardware and most importantly – excellent cooling performance. That was over ten years ago, and it seems that Noctua is still very well respected for all the same reasons today.

Their gear has always been pricey compared to the competition, but when it comes to Noctua, you get what you pay for.

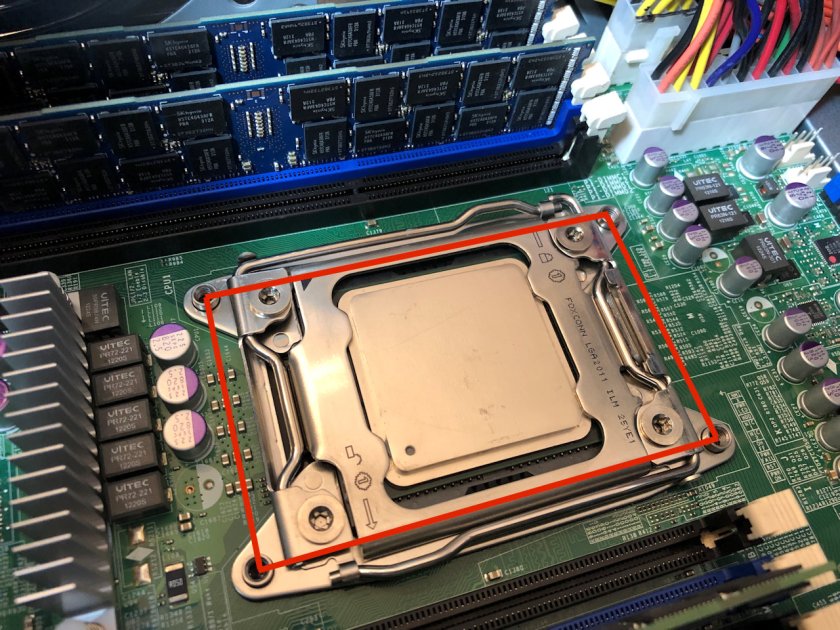

Square vs Narrow ILM LGA 2011

One of the challenges I had with my new/used Supermicro X9SRL-F boards is that they don’t use the common ‘square’ LGA 2011 mounting pattern. Instead, they use what’s referred to as ‘Narrow ILM’, which makes the socket more rectangular in shape and frees up real estate for memory slots and other components on the board. Because of this, I was severely limited in my heatsink choices. Most of what’s available out there for Narrow ILM are rack mount heatsinks similar to what I’m hoping to remove. The majority of the consumer-grade stuff for LGA 2011 simply won’t fit.

Here you can see the Dynatron R13 narrow ILM heatsink mounted:
